This page contains source code and datasets for our paper, Learning Invariant Features in Modulatory Networks Through Conflict and Ambiguity.

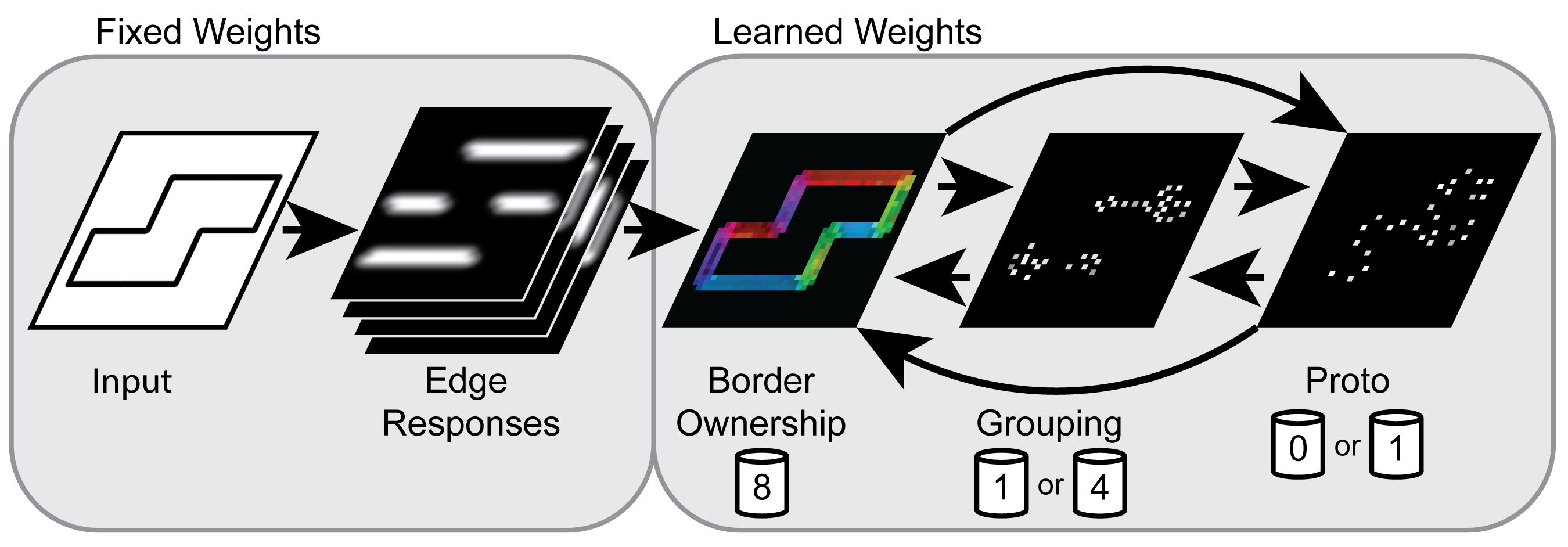

Although Hebbian learning has long been a key component in understanding neural plasticity, it has not yet been successful in modeling modulatory feedback connections, which make up a significant portion of connections in the brain. We develop a new learning rule designed around the complications of learning modulatory feedback and composed of three simple concepts grounded in physiological evidence. Using border ownership as a prototypical example, we show that a Hebbian learning rule fails to properly learn modulatory connections, while our proposed rule correctly learns a stimulus-driven model. Additionally, we show that the rule can be used as a drop-in replacement for a Hebbian learning rule to learn a biologically consistent model of orientation selectivity. This is the first time a border ownership network has been learned, accomplished while retaining the ability to learn even in contexts lacking modulatory connections, as the learned orientation selective network demonstrates. Our results predict that the mechanisms we use are likely crucial for learning modulatory connections in the brain and furthermore that modulatory connections cannot be learned prior to inhibition.

All experiments were run using code written in modern C++ (C++11 and newer), built on a toolkit developed specifically for conflict learning. Analysis was performed using a combination of code written in C++, MATLAB, and Python.

Experiments and analysis as reported in the paper were performed under Ubuntu 16.04. We provide no support for the code and cannot guarantee that it works on any other system. The code requires a recent release of OpenCV 2.X as well as the serialization library cereal. MATLAB must also be installed. The code is designed to run in a multithreaded environment, using either Intel TBB or OpenMP (a CMake flag selects which to use). Please see the CMakeLists.txt configuration file for more details on requirements, and for where to supply the necessary directory information.
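As a rough illustration of how a build-time choice between Intel TBB and OpenMP can look in C++, here is a minimal sketch. It is not taken from the released source: the macro `USE_TBB` and the functions `forEachIndex` and `squareAll` are hypothetical names chosen for this example, and the actual flag is the one defined in CMakeLists.txt. The sketch falls back to a serial loop when neither backend is enabled.

```cpp
#include <cstddef>
#include <vector>

#if defined(USE_TBB)  // hypothetical macro; the real build flag may differ
#include <tbb/parallel_for.h>
#endif

// Call f(i) for every i in [0, n) using whichever backend was compiled in.
// Iterations must be independent of one another.
template <typename F>
void forEachIndex(std::size_t n, F f) {
#if defined(USE_TBB)
    tbb::parallel_for(std::size_t(0), n, [&](std::size_t i) { f(i); });
#elif defined(_OPENMP)
    // A signed loop variable keeps this valid for older OpenMP versions.
    #pragma omp parallel for
    for (long long i = 0; i < static_cast<long long>(n); ++i) {
        f(static_cast<std::size_t>(i));
    }
#else
    for (std::size_t i = 0; i < n; ++i) {
        f(i);
    }
#endif
}

// Example workload: square each element. Each index writes only its own slot.
std::vector<double> squareAll(const std::vector<double>& in) {
    std::vector<double> out(in.size());
    forEachIndex(in.size(), [&](std::size_t i) { out[i] = in[i] * in[i]; });
    return out;
}
```

Keeping the backend choice inside one helper means the rest of the code calls `forEachIndex` without caring which threading library was selected at configure time.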

Once built, the C++ programs are all run in a similar fashion. The first command line argument must be a configuration file (see the config folder), which specifies information such as the number of CPU cores to use. To load an already trained network (saved with the .cereal extension), supply that file as the second argument. To train instead, provide at least three command line arguments in total; the arguments after the configuration file path can be anything. Additional information can be found by reading the source code for the main programs. Main.C is the entry point for the border ownership experiments.
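The argument convention described above can be sketched as a small dispatch function. This is one reading of that description, not the logic in Main.C: the names `RunMode`, `RunPlan`, and `planRun` are hypothetical, the argument count is assumed to exclude the program name, and the behavior when only a configuration file is given is not documented, so the sketch simply reports usage in that case.

```cpp
#include <string>
#include <vector>

// Hypothetical names for illustration; the real entry programs may differ.
enum class RunMode { Usage, LoadTrained, Train };

struct RunPlan {
    RunMode mode;
    std::string configPath;   // always the first argument
    std::string trainedPath;  // set only when loading a .cereal file
};

// args holds the command line arguments without the program name,
// i.e. argv[1] through argv[argc - 1].
RunPlan planRun(const std::vector<std::string>& args) {
    if (args.empty()) {
        return {RunMode::Usage, "", ""};
    }
    if (args.size() == 2) {
        // Configuration file followed by a trained .cereal file.
        return {RunMode::LoadTrained, args[0], args[1]};
    }
    if (args.size() >= 3) {
        // Three or more arguments: train; the extra arguments are ignored.
        return {RunMode::Train, args[0], ""};
    }
    // Configuration file only: undocumented, treated here as a usage error.
    return {RunMode::Usage, args[0], ""};
}
```

A caller would build `args` from `argv + 1` and branch on the returned `mode`. Consult the source of the main programs for the authoritative handling.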

The core logic for the learning rules can be found in the Neuron, BorderOwnershipNeuron, GroupingNeuron, and ProtoObjectNeuron files within the src directory. Activation dynamics are handled by each neuron's updateImpl function, while learnImpl has most of the learning logic. The propogateAccumulatedWeight function handles the short-long-term dynamics of conflict learning.

The best way to understand the source code is to start with an entry program, such as Main.C (for border ownership), and follow along as it proceeds through Border.C.

All source is provided on an 'as-is' basis with no warranty or support. The source code is licensed under the GPL v3. Please see the source for license details.

Source code: zip

All data, except for the natural images, is generated procedurally for each experiment. See the source code for details.

Natural image data is taken from a subset of the BSDS 500 dataset. Individual images are provided below.

Car: contour, ground truth