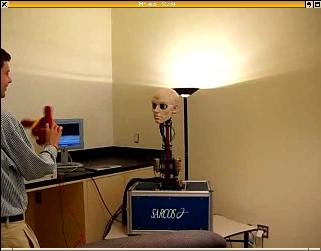

This is a simple demonstration that we put together with robotics experts from Stefan Schaal's laboratory for the Robot Exposition and Open House at the National Science Foundation on September 16, 2005. Zach Gossman, an undergraduate student at iLab, wrote the code for this demo.

A stripped-down version of our saliency-based visual attention code runs in real time on a portable workstation with a single dual-core processor. Video from cameras in the robot's eyes is digitized and processed at 30 frames/s. The resulting saliency maps highlight, in real time, which locations in the robot's surroundings would most strongly attract the attention of a typical human observer. At any given moment, the coordinates of the most active locations are sent to the motor control system of the robot head, which executes a combination of rapid (saccadic) eye movements and slower (smooth pursuit) eye and head movements.
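The loop described above (compute a saliency map, pick its most active location, then choose between a saccade and smooth pursuit) can be illustrated with a minimal sketch. This is not the iLab code. The full saliency model combines several feature channels (such as color, intensity and orientation) at multiple spatial scales; here we assume a single intensity channel with a box-filter center-surround difference. All function names, the `saccade_threshold` and the `pursuit_gain` are hypothetical values chosen for illustration, and the gaze is modeled in image pixel coordinates rather than real motor commands.

```python
import math
from typing import List, Tuple

Grid = List[List[float]]


def box_blur(img: Grid, radius: int) -> Grid:
    """Mean filter over a (2r+1)x(2r+1) window, averaging only in-bounds pixels."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    yy, xx = y + dy, x + dx
                    if 0 <= yy < h and 0 <= xx < w:
                        total += img[yy][xx]
                        count += 1
            out[y][x] = total / count
    return out


def intensity_saliency(img: Grid, center_r: int = 0, surround_r: int = 3) -> Grid:
    """Hypothetical single-channel saliency: |center mean - surround mean| per pixel."""
    center = box_blur(img, center_r)
    surround = box_blur(img, surround_r)
    return [[abs(c - s) for c, s in zip(crow, srow)]
            for crow, srow in zip(center, surround)]


def most_salient(sal: Grid) -> Tuple[int, int]:
    """Return (x, y) of the first maximum in row-major order."""
    best, best_v = (0, 0), sal[0][0]
    for y, row in enumerate(sal):
        for x, v in enumerate(row):
            if v > best_v:
                best_v, best = v, (x, y)
    return best


def gaze_step(gaze: Tuple[float, float], target: Tuple[int, int],
              saccade_threshold: float = 5.0,
              pursuit_gain: float = 0.2) -> Tuple[str, Tuple[float, float]]:
    """Jump straight to distant targets (saccade); drift toward nearby ones (pursuit)."""
    dx, dy = target[0] - gaze[0], target[1] - gaze[1]
    if math.hypot(dx, dy) > saccade_threshold:
        return "saccade", (float(target[0]), float(target[1]))
    return "pursuit", (gaze[0] + pursuit_gain * dx, gaze[1] + pursuit_gain * dy)


if __name__ == "__main__":
    # A dark 16x16 frame with one bright spot at x=12, y=4.
    frame = [[0.0] * 16 for _ in range(16)]
    frame[4][12] = 1.0
    target = most_salient(intensity_saliency(frame))
    print(target)                          # (12, 4)
    print(gaze_step((8.0, 8.0), target))   # ('saccade', (12.0, 4.0))
```

Splitting the motor decision on a distance threshold is just one simple way to mix the two movement types: large gaze errors are corrected in a single jump, while small ones are tracked smoothly, which mirrors the qualitative behavior described above without claiming to match the robot's actual controller.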

The robot behaved in a very natural and credible way, even when the room was packed with people during the open house. Below are some videos.

Movie 1 (2.0 Mb DivX) | Movie 2 (2.2 Mb DivX)

Here is a poster (13 Mb PDF) describing the project.

Check out this press coverage of the event by Wired News. Also see this funny coverage of the exhibit at Engadget, and more pictures at the Chinese People's Daily Online.

Also check out this press coverage:

Copyright © 2006 by the University of Southern California, iLab and Prof. Laurent Itti